Vibe Coding The Design Process

What happens when coding agents move up the knowledge stack?

I’ve been vibe coding for a few years now: describing what I want to build in plain language and letting an AI agent figure out the how. As someone who spent decades leading technology teams but has little formal coding experience, I found it useful from the start. But as models improved and tools like Claude Code gave agents terminal-level access to entire development environments, it became something else entirely. The feedback loop between intent and working software collapsed from days to minutes. I was building things in afternoon sprints that would have taken teams weeks.

At some point the obvious question surfaced: what if the output isn’t software? What if you could vibe code any kind of knowledge work?

That’s the path I’ve been on for the past year — exploring what happens when the agentic approach that transformed coding gets applied to design and creative processes. This post is about what I’ve found.

From workflows to agents

To understand why this matters, it helps to clarify what makes an agent different from a standard AI workflow.

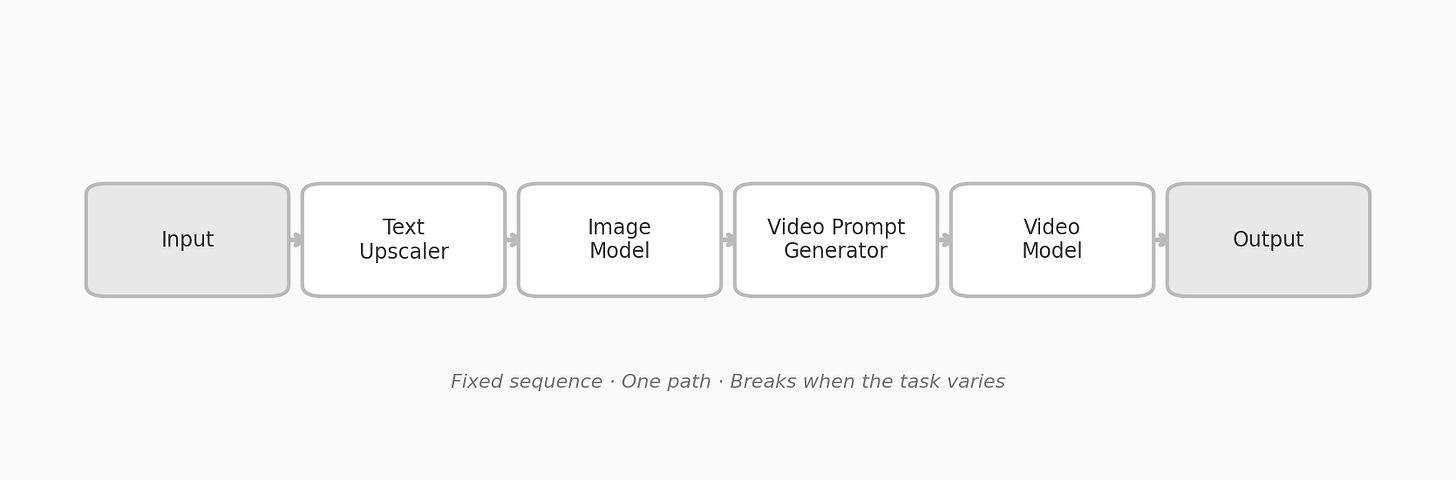

A traditional AI workflow is a fixed pipeline. Input goes in, passes through a predetermined sequence of models, and a finished artifact comes out the other end.

Deterministic Workflow

Fixed sequence. One path. Breaks when the task varies.

This works for repetitive tasks. But it breaks the moment the brief changes — and the brief always changes.

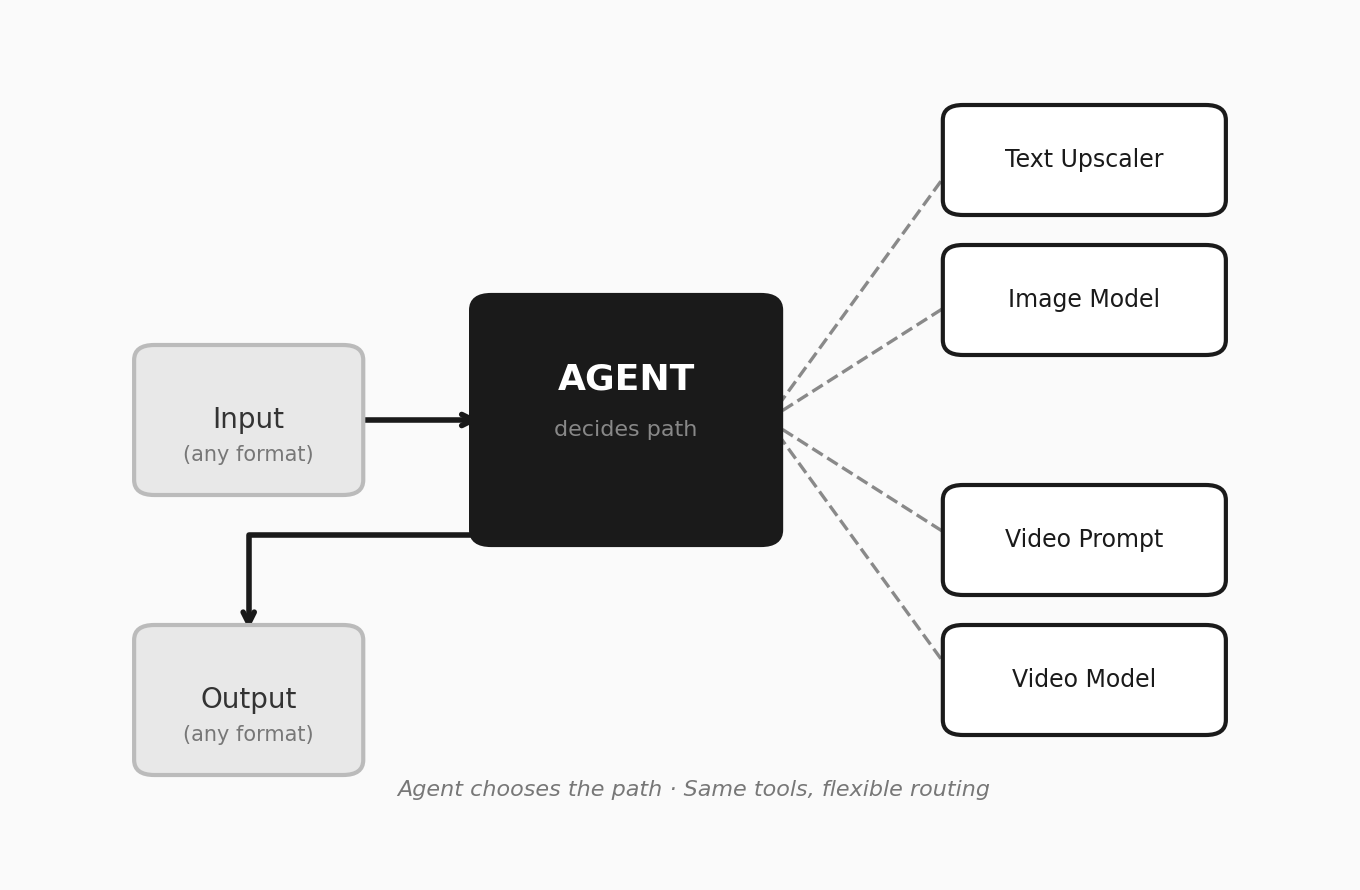

An agent operates differently. It receives a goal and decides which tools to use, in what order, and under what conditions.

Agentic Workflow

The agent chooses the path. Text→image, text→video, image→video all work without reprogramming the system.
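To make the contrast concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the three "model" functions are stand-ins that only tag their input, and a breadth-first search stands in for the decision a real agent's model would make about which tool to call next.

```python
from collections import deque
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-ins for real generative models; each just tags its input.
def text_to_image(x: str) -> str:
    return f"image({x})"

def text_to_video(x: str) -> str:
    return f"video({x})"

def image_to_video(x: str) -> str:
    return f"video({x})"

def fixed_pipeline(prompt: str) -> str:
    """Deterministic workflow: always text -> image -> video, nothing else."""
    return image_to_video(text_to_image(prompt))

# Tool registry keyed by (input kind, output kind).
Step = Tuple[str, str]
TOOLS: Dict[Step, Callable[[str], str]] = {
    ("text", "image"): text_to_image,
    ("text", "video"): text_to_video,
    ("image", "video"): image_to_video,
}

def plan(source: str, goal: str) -> List[Step]:
    """Pick the shortest chain of tools from source kind to goal kind.

    A real agent would let the model choose; the search is an assumption
    made only so this example runs on its own.
    """
    queue = deque([(source, [])])
    seen = {source}
    while queue:
        kind, path = queue.popleft()
        if kind == goal:
            return path
        for src, dst in TOOLS:
            if src == kind and dst not in seen:
                seen.add(dst)
                queue.append((dst, path + [(src, dst)]))
    raise ValueError(f"no tool path from {source} to {goal}")

def run_agent(value: str, source: str, goal: str) -> str:
    """Agentic workflow: the path depends on the goal, not on the code."""
    for step in plan(source, goal):
        value = TOOLS[step](value)
    return value
```

With this sketch, `run_agent("a red kite", "text", "video")` and `run_agent("photo.png", "image", "video")` both work without any change to the system, while `fixed_pipeline` only ever accepts text and only ever takes one route.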

Give a coding agent terminal access and it can install packages, write and test code, read documentation — anything a developer could do through a command line. This is why vibe coding works. The agent isn’t following a script. It’s navigating a problem space with access to real tools.

The question is what happens when agents move beyond the terminal — into the software where other kinds of knowledge work actually happen.

Vibe coding the design process

Anyone who has gone deep into vibe coding knows the pattern. You get the best results when you bring well-structured code, good libraries, and clear intent. The agent handles the execution at speed. You steer the direction. The output is a fusion of human judgment and machine capability that neither could produce alone.

The creative process works the same way.

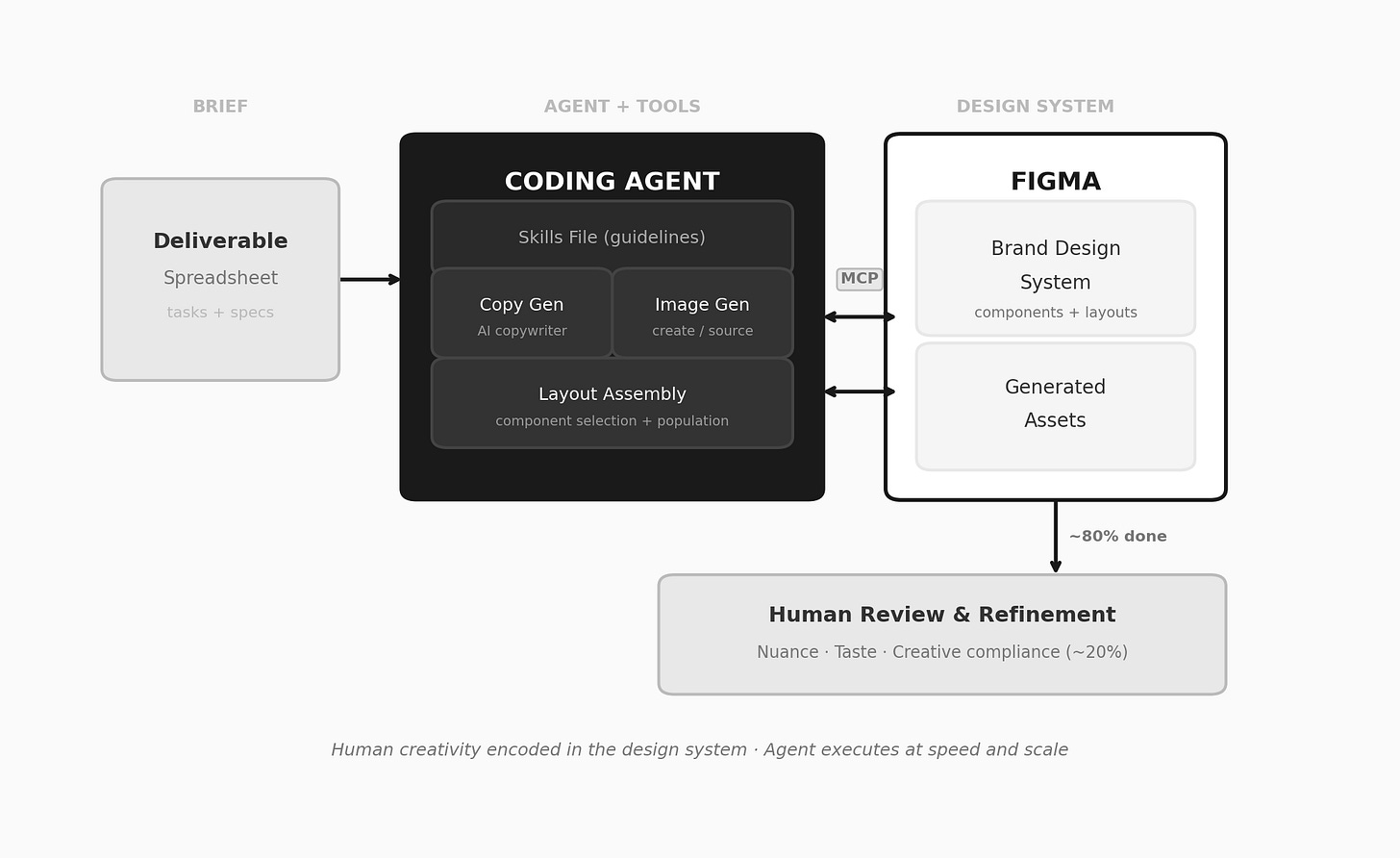

We’ve been connecting a coding agent to Figma — through a custom plugin that bridges the two using Figma’s MCP capability and design editing APIs. The agent can read file structures, understand components, manipulate layers, swap content, and adjust layouts. It operates Figma much like a human designer would — just faster.

Vibe coding production design using an agent that operates Figma.

But the interesting part isn’t the tool access. It’s what happens when you pair the agent with a well-organized design system — a library of components with variable layouts, essentially the visual DNA of a brand — along with clear guidelines for how to use it. You’re merging human creativity, encoded in the design system, with an agent’s ability to execute at speed and scale. And with a human in the loop driving intention, the parallels to good vibe coding are exact: well-structured patterns, quality libraries, clear intent.

The agent doesn’t need to be creative. It needs to be literate in the system. And with the right documentation, it is.

In practice, this means those detailed spreadsheets of design requirements, the ones that used to take teams days of mechanical setup, can be fed to an agent that does roughly 80% of the work for you. Because the output lives in Figma, a fundamentally collaborative tool, human teams step in for the remaining 20%: the nuance, the taste, the thing that makes creative work creative.

The Vibe Design Pipeline

AI handles setup and population. Humans step in for creative refinement — in the actual design tool.
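The split between agent work and human work can be sketched as a simple triage step. This is not our plugin and it does not call Figma; the design system, component names, and spreadsheet columns below are all made up for illustration. The idea is only that rows the design system can express go straight to the agent, and everything else is routed to a person.

```python
import csv
import io

# Hypothetical design system: component name -> layout variants it supports.
DESIGN_SYSTEM = {
    "hero_banner": {"wide", "square"},
    "product_card": {"square", "tall"},
}

# Hypothetical requirements sheet, inlined so the example is self-contained.
SHEET = """component,layout,headline
hero_banner,wide,Summer Sale
product_card,tall,New Arrivals
hero_banner,tall,Clearance
promo_strip,wide,Free Shipping
"""

def triage(sheet_text):
    """Split rows into those an agent can populate and those needing a human."""
    ready, needs_review = [], []
    for row in csv.DictReader(io.StringIO(sheet_text)):
        variants = DESIGN_SYSTEM.get(row["component"])
        if variants is None:
            needs_review.append((row, "unknown component"))
        elif row["layout"] not in variants:
            needs_review.append((row, "layout not in design system"))
        else:
            ready.append(row)
    return ready, needs_review

ready, needs_review = triage(SHEET)
for row in ready:
    print("agent populates:", row["component"], row["layout"], row["headline"])
for row, reason in needs_review:
    print("human reviews:", row["component"], "-", reason)
```

The richer the design system, the more rows land in the first list, which is the same point made above about foundations.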

Like vibe coding, this works best when there’s a high-quality foundation to begin with. The AI doesn’t replace the design system; it operates within it. The better the system, the better the output.

Beyond design

The same pattern applies wherever you can give an agent the right tools and a human expert to steer.

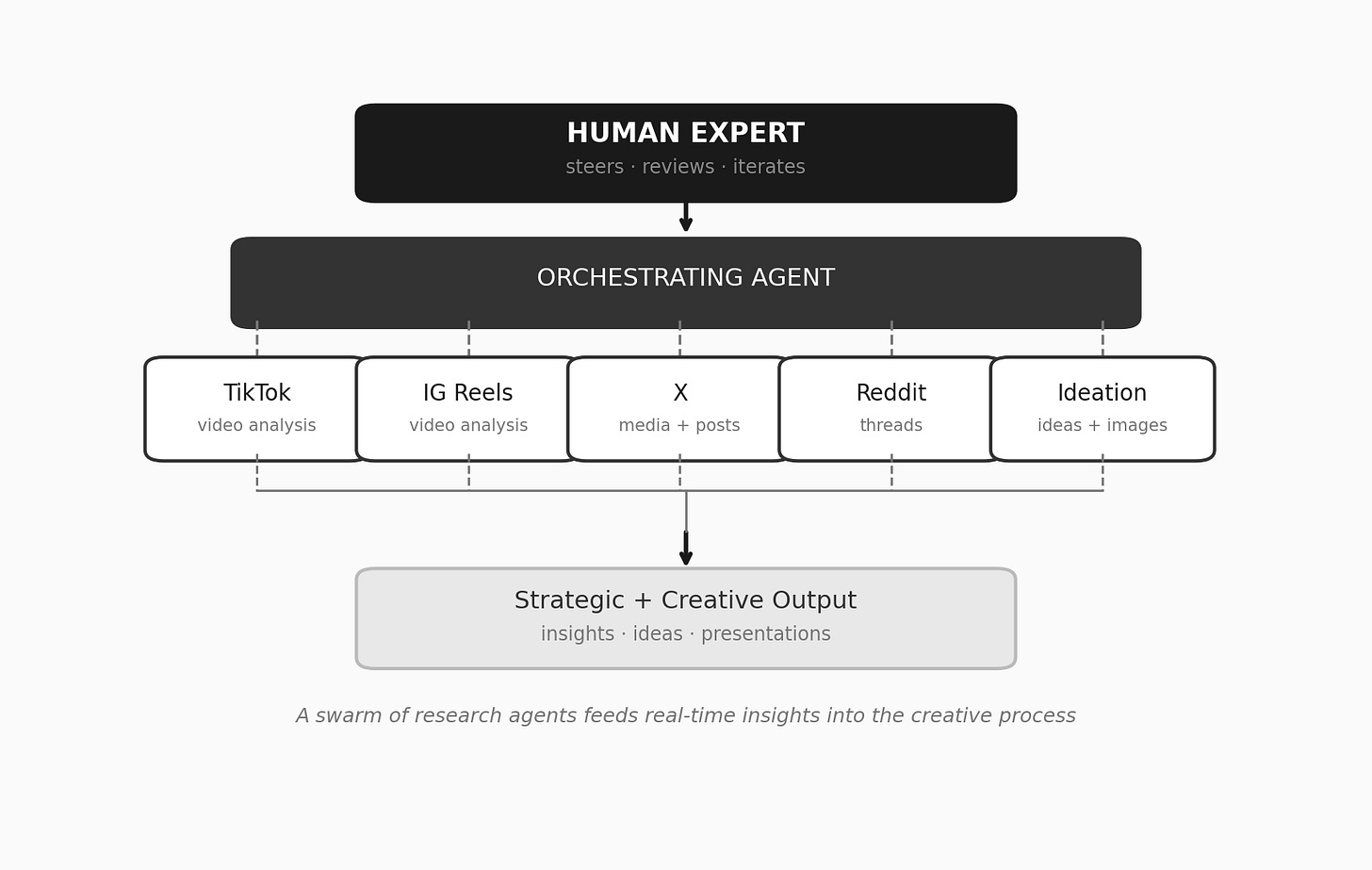

I’ve been vibe coding research reports by connecting my IDE to a swarm of MCP-based research tools: sub-agents that watch and analyze TikTok videos, Instagram Reels, X posts, and Reddit threads. For creative presentations, I point Cursor at a custom idea-generator tool. The process is the same every time: connect the agent to the tools, pilot the session like I would a coding project, and steer the output toward something useful.

Agent Swarm Architecture

A swarm of research agents feeds real-time insights into the creative process.
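The fan-out shape of a swarm can be sketched with Python's standard thread pool. The sub-agents here are hypothetical placeholders that return a canned string; in a real setup each would be an MCP tool call, and nothing below reflects the actual tools or their interfaces.

```python
from concurrent.futures import ThreadPoolExecutor

# The four sources named in the post; the agents themselves are placeholders.
SOURCES = ["tiktok", "instagram", "x", "reddit"]

def make_agent(source):
    """Build a hypothetical sub-agent for one source."""
    def agent(topic):
        # A real sub-agent would fetch and analyze content here.
        return f"[{source}] notes on {topic}"
    return agent

def run_swarm(topic):
    """Run every sub-agent on the same topic in parallel and collect results."""
    agents = {source: make_agent(source) for source in SOURCES}
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        futures = {s: pool.submit(a, topic) for s, a in agents.items()}
        # result() blocks until that sub-agent finishes.
        return {s: f.result() for s, f in futures.items()}

findings = run_swarm("sneaker trends")
for source, note in findings.items():
    print(source, "->", note)
```

The orchestrating session then works from `findings` as a single bundle, which is what lets one person steer several lines of research at once.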

What else can we vibe code? Financial models. Legal reviews. Sales decks. Training materials. Anything that involves a skilled human synthesizing information and producing a structured deliverable — which, if you think about it, describes most of what knowledge workers do all day.

Moving up the stack

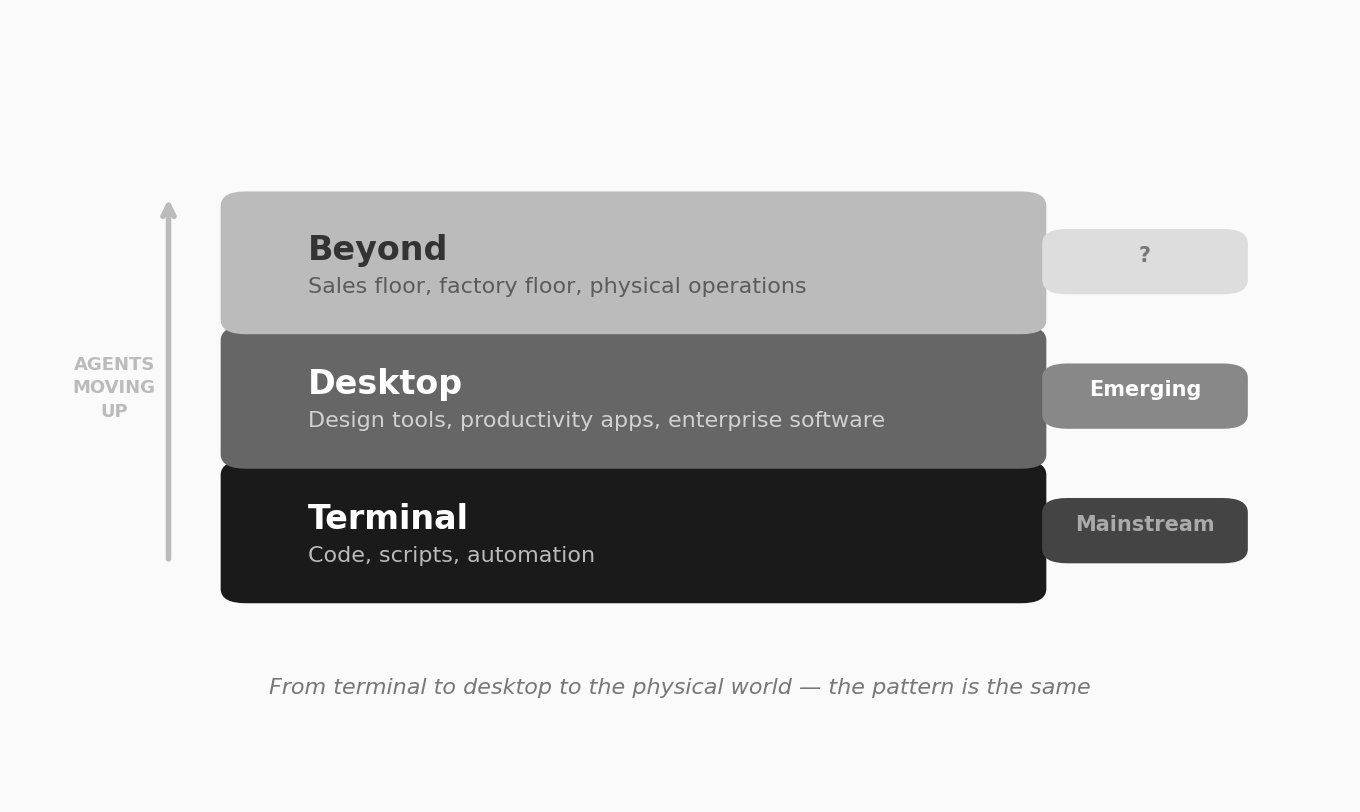

Coding agents found market fit in the terminal. They’re now moving to the desktop: operating Figma, browsing the web, and navigating enterprise software, powered by an emerging suite of tools and whatever custom MCP servers and skills files you give them. That’s the phase we’re in.

The next phase is harder to predict but easy to imagine. Agents on the sales floor, processing real-time customer signals and generating responses. Agents on the factory floor, interfacing with operational systems the way they currently interface with code editors. Agents embedded in any process where a human being currently sits between information and action.

The Knowledge Stack

Each step up the stack follows the same logic. Give the agent access to the tools. Give it clear guidelines. Put a human in the loop. The tools change. The pattern doesn’t.
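As a rough illustration of that pattern, the sketch below treats guidelines as an allow-list of tool names and the human in the loop as an approval callback. All names are hypothetical and the "tools" are trivial lambdas; it is one possible shape under those assumptions, not a description of any particular product.

```python
from typing import Callable, Dict, List, Set, Tuple

def run_with_oversight(
    proposed: List[Tuple[str, str]],
    tools: Dict[str, Callable[[str], str]],
    allowed: Set[str],
    approve: Callable[[str, str], bool],
) -> List[str]:
    """Execute proposed (tool, argument) actions under guidelines and review."""
    log = []
    for name, arg in proposed:
        if name not in tools or name not in allowed:
            # Guidelines: anything outside the allow-list never runs.
            log.append(f"blocked: {name}")
        elif not approve(name, arg):
            # Human in the loop: a person can veto an individual action.
            log.append(f"rejected: {name}")
        else:
            log.append(tools[name](arg))
    return log

# Hypothetical tools and a stand-in reviewer that vetoes anything marked "final".
tools = {
    "draft_slide": lambda title: f"slide drafted: {title}",
    "send_email": lambda to: f"email sent to {to}",
}
reviewer = lambda name, arg: "final" not in arg

log = run_with_oversight(
    proposed=[
        ("draft_slide", "Q3 overview"),
        ("draft_slide", "final pricing"),
        ("send_email", "client"),
    ],
    tools=tools,
    allowed={"draft_slide"},
    approve=reviewer,
)
print(log)
```

Swapping the `tools` dictionary is all it takes to move the same loop from slides to spreadsheets or anything else; the guidelines and the reviewer stay where they are.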

We’re early. The workflows are rough and the tools will be dramatically better a year from now. But the underlying shift is structural, not incremental. A single person accompanied by a swarm of agents can now accomplish knowledge work that used to require entire teams. That gap will only widen.

Addition is R/GA’s applied Intelligence AI studio.

Visit Addition.ml to learn more.